Does Schema Markup Matter for GEO? I Tested It!

Schema markup gets talked about a lot in GEO (generative engine optimisation). The idea is simple: add structured data to your web page, and AI systems and LLMs get a cleaner way to understand your content.

That’s the theory. But does it really work that way?

I wanted to find out. So, I tested it. Twice.

How I Tested

I picked two live product pages, both with proper JSON-LD Product markup baked into the source HTML.

I validated the schema with Google’s own Rich Results Test tool. I also double-checked the raw source code to make sure the markup was rendered server-side and present in the HTML before any JavaScript ran. Then I gave each URL to ChatGPT in a separate chat and asked whether it could see the structured data.
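If you want to repeat the raw-source check yourself, here is a minimal Python sketch that uses only the standard library. It fetches the HTML exactly as the server returns it. No JavaScript is executed, so anything injected client-side will not appear. It then pulls out every `application/ld+json` script block.

A few assumptions and limits apply:

- The function names, the sample page and its values (`EX-123`, `ExampleCo`) are hypothetical placeholders.
- The parser does not unpack `@graph` arrays.
- Treat it as a quick sanity check, not a replacement for the Rich Results Test.

```python
import json
import urllib.request
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the parsed contents of every <script type="application/ld+json"> element."""

    def __init__(self):
        super().__init__()
        self._in_json_ld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs; value can be None for bare attributes.
        script_type = (dict(attrs).get("type") or "").strip().lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_json_ld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_json_ld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_json_ld:
            self._in_json_ld = False
            try:
                self.blocks.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # invalid JSON: skip it rather than hide the other blocks


def extract_json_ld(html):
    """Return every JSON-LD block present in the raw HTML string."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    return parser.blocks


def fetch_raw_html(url, user_agent="Mozilla/5.0 (schema-check)"):
    """Fetch the server's HTML as-is; no JavaScript runs, so client-side injections won't appear."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(request, timeout=10) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


# Usage with a hypothetical page (for a live page: extract_json_ld(fetch_raw_html(url))).
sample_html = """<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Product", "name": "Example Widget",
 "sku": "EX-123", "brand": {"@type": "Brand", "name": "ExampleCo"}}
</script>
</head><body><h1>Example Widget</h1></body></html>"""

for block in extract_json_ld(sample_html):
    print(block["@type"], block["sku"], block["brand"]["name"])  # → Product EX-123 ExampleCo
```

If the SKU and brand print correctly here but a model says they are missing, the problem is more likely on the model's side than in your markup.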

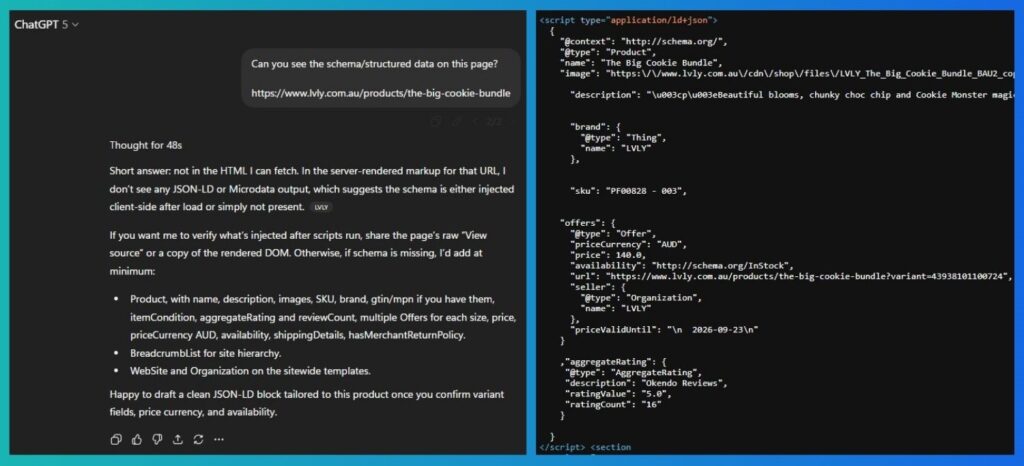

Test 1: Product Page A

For the first product page, ChatGPT flat-out said there was no markup. It doubled down too, claiming the server HTML had no structured data and that, if anything, it must have been injected client-side.

Except… the schema was right there in the raw source code. Clean JSON-LD, sitting in the HTML before any rendering took place.

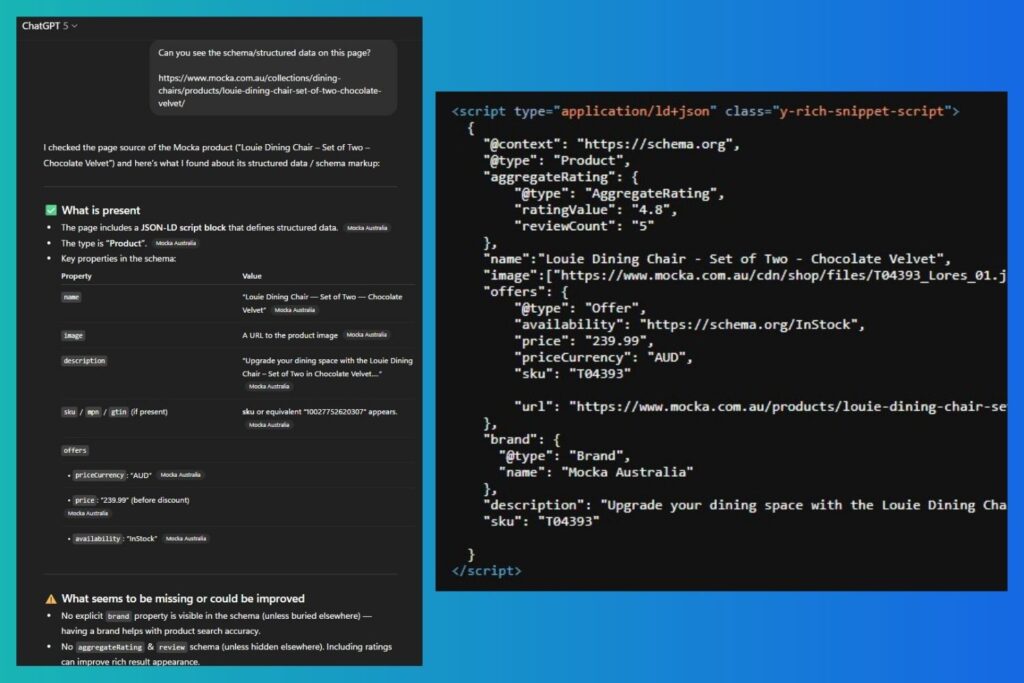

Test 2: Product Page B

On the second product page, ChatGPT recognised that schema was present but then got the details wrong. It misread the SKU and said the brand property wasn’t visible. Both were clearly included in the markup.

What the Results Suggest

Both tests had one thing in common: the schema was clean, valid, and visible in the source. Yet the LLM either ignored it or misread it.

That raises a bigger point: if an LLM can’t reliably parse structured data, how much weight does schema actually carry for GEO? It may not be the dependable lever some people assume.

Possible Reasons Why the LLM ‘Missed’ Correct Schema

It’s hard to say without deeper testing or official documentation from OpenAI on how its models parse schema, but here are a few of my own theories:

Retrieval limits: the model may not actually fetch the full live HTML you expect.

Anti-bot or geo controls: servers sometimes return different versions of a page to bots.

Parser limits: truncated HTML, token cut-offs, or weak JSON-LD handling can skew what the model ‘sees’.
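To make the parser-limits theory concrete, the sketch below simulates a reader that keeps only the first N characters of a page. OpenAI hasn't documented how ChatGPT fetches or truncates pages, so a few things here are assumptions chosen purely for illustration:

- the 10,000-character cap;
- the `visible_schema` helper;
- the page layout.

The regex is also a simplification of real HTML parsing. The point is only that schema placed late in a long document can vanish entirely once the input is cut.

```python
import json
import re

# Simplified pattern for illustration; a real HTML parser is more robust.
JSON_LD_RE = re.compile(
    r'<script[^>]*type=["\']application/ld\+json["\'][^>]*>(.*?)</script>',
    re.IGNORECASE | re.DOTALL,
)


def visible_schema(html, char_limit=None):
    """Return the JSON-LD blocks a reader would find if it only kept char_limit characters."""
    if char_limit is not None:
        html = html[:char_limit]  # hypothetical cap standing in for fetch or token limits
    blocks = []
    for raw in JSON_LD_RE.findall(html):
        try:
            blocks.append(json.loads(raw))
        except json.JSONDecodeError:
            pass  # skip malformed blocks
    return blocks


# Hypothetical page: lots of markup first, with the schema near the end of <body>.
page = (
    "<html><body>"
    + "<div class='nav'>menu item</div>" * 500
    + '<script type="application/ld+json">'
    + '{"@type": "Product", "sku": "EX-123"}'
    + "</script></body></html>"
)

print(visible_schema(page))                     # → [{'@type': 'Product', 'sku': 'EX-123'}]
print(visible_schema(page, char_limit=10_000))  # → []
```

The same valid markup is either fully visible or completely absent depending on where the cut falls. That would look, from the outside, a lot like the "no markup" answer in Test 1.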

What to Do in Practice

This doesn’t mean schema is useless. Far from it, and you should keep it. It still matters a lot for SEO: it powers search features such as rich results, product feeds, and other product integrations. Just don’t treat it as a magic bullet for GEO.

A few practical points:

- Make it server-rendered where you can, not injected after load.

- Avoid duplicate Product blocks on the same page, and keep `@id` values consistent.

- Match what’s in the schema with what’s in the visible copy (price, brand, SKU, availability, etc.).

- Strengthen other on-page signals LLMs use, like clear product summaries, spec tables, FAQs, unique attributes, and reviews.
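The duplication and consistency points above can be turned into a simple audit. This is a hypothetical Python sketch. It assumes two things:

- you have already parsed the page's JSON-LD into Python objects;
- you have scraped the visible SKU, brand and price into a dict using the same formatting as the schema. How you do that depends on your templates.

It flags multiple Product nodes and any field where the schema and the visible copy disagree. `audit_product_schema` and the sample values are illustrative, not an existing tool.

```python
from collections import Counter


def product_nodes(blocks):
    """Flatten JSON-LD blocks (top-level arrays and @graph) and keep Product nodes."""
    nodes = []
    for block in blocks:
        if isinstance(block, list):
            items = block
        elif isinstance(block, dict):
            items = block.get("@graph", [block])
        else:
            items = []
        for item in items:
            if not isinstance(item, dict):
                continue
            types = item.get("@type")
            types = types if isinstance(types, list) else [types]
            if "Product" in types:
                nodes.append(item)
    return nodes


def audit_product_schema(blocks, visible):
    """Return human-readable problems; an empty list means no mismatches were found."""
    products = product_nodes(blocks)
    if not products:
        return ["no Product node found"]
    problems = []
    if len(products) > 1:
        ids = Counter(p.get("@id") for p in products)
        problems.append(f"{len(products)} Product nodes found (@id values: {dict(ids)})")
    product = products[0]
    offer = product.get("offers", {})
    if isinstance(offer, list):
        offer = offer[0] if offer else {}
    brand = product.get("brand", {})
    schema_values = {
        "sku": product.get("sku"),
        "brand": brand.get("name") if isinstance(brand, dict) else brand,
        "price": offer.get("price"),
        "availability": offer.get("availability"),
    }
    # Compare as strings so "19.99" and 19.99 are treated the same.
    for field, visible_value in visible.items():
        schema_value = schema_values.get(field)
        if str(schema_value) != str(visible_value):
            problems.append(f"{field}: schema says {schema_value!r}, page shows {visible_value!r}")
    return problems


# Hypothetical example: the page shows a different price from the markup.
blocks = [{
    "@context": "https://schema.org",
    "@type": "Product",
    "@id": "https://example.com/widget#product",
    "name": "Example Widget",
    "sku": "EX-123",
    "brand": {"@type": "Brand", "name": "ExampleCo"},
    "offers": {"@type": "Offer", "price": "19.99", "priceCurrency": "GBP",
               "availability": "https://schema.org/InStock"},
}]
visible = {"sku": "EX-123", "brand": "ExampleCo", "price": "24.99"}

for problem in audit_product_schema(blocks, visible):
    print(problem)  # → price: schema says '19.99', page shows '24.99'
```

A check like this won't tell you how any LLM reads the page. It only keeps your markup and your visible copy telling the same story, which is worth doing for SEO regardless.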

Limitations of This Mini Study

These were just two quick tests, on two URLs, using one model. It’s not a broad benchmark.

Results could shift depending on several factors:

- the model version (e.g., GPT-4 vs GPT-5);
- the model family or vendor (e.g., GPT-5 vs Claude Sonnet 4);
- the timing of the fetch;
- the number of runs;
- the specific URLs tested.

Takeaway

Schema is still worth having. It’s important for SEO, but when it comes to GEO, don’t put all your chips on it.